Some quick testing suggests H.264 in lossless mode is the best option. Of the lossless codecs tested it had the best combination of file size (way smaller than HuffYUV) and speed (it can mostly keep up with the capture even on my old laptop). It is also one of the best supported codecs for compatibility. The capture file will be largish (around 20MB per minute), and will require some further encoding once the capture/editing is completed to reduce the file sizes.

Lossless H.264 video:

ffmpeg -hide_banner \
-c:v libx264rgb -crf 0 -preset ultrafast \

Using MP4 as the container seems to be faster, so for high-res screen-only demos, this is the best choice. It is the -crf 0 option that makes the capture lossless. The -probesize is there to give the encoder enough initial data to allow it to analyse the input streams. The value 20M was determined experimentally - values lower than this generate a warning.

Screen and audio

Under Linux, PulseAudio seems to be the best bet for configuring multiple audio devices. To find a recording device's ID:

pactl list sources

We are using Matroska as the container since it is by far the most flexible of the containers. MP4 does not allow for any lossless audio formats.

Lossless H.264 video, and 16-bit PCM (WAV) audio:

ffmpeg -hide_banner \
capture.mkv

Hardware accelerated capture via VAAPI (Linux)

Note that I have pretty much given up on accelerated capture/processing. The captured audio is glitchy, there is no way to capture losslessly, and the speed gain on older hardware is negligible. I get the impression that CPU-only is the preferred mechanism - even if you have hardware-acceleration capabilities. The results are just better, and more consistent, assuming you have a reasonable CPU and the time to wait.

Requires the libva-intel-driver package for i915 chipsets. This does improve encoder speed a bit, but has limited features. There is no true lossless mode, and no true CRF mode. However, the following will do a reasonable job, although it will likely produce larger file sizes:

Capture (screen only)

ffmpeg -hide_banner \
-hwaccel vaapi -vaapi_device /dev/dri/renderD128 \
-vf 'hwupload,scale_vaapi=format=nv12' -c:v h264_vaapi -qp 1 -compression_level 2 \
capture.mkv

Capture (screen + audio)

ffmpeg -hide_banner \
-c:v h264_vaapi -qp 1 -compression_level 2 \
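As a minimal sketch of how the CPU-only lossless capture commands might be assembled in full: the encoder options (-c:v libx264rgb -crf 0 -preset ultrafast) and -probesize 20M come from the text, while the x11grab input, the display :0.0, the 1920x1080 size, the 30 fps frame rate, the PulseAudio source name, and where -probesize is placed are all assumptions for illustration. The `capture` helper is hypothetical and only prints the command unless DRY_RUN=0 is set.

```shell
#!/bin/sh
# Sketch: build a lossless screen capture command (CPU-only, libx264rgb).
# ASSUMPTIONS (not from the post): x11grab input, display, size, frame rate,
# PulseAudio source name. Adjust these to match your own setup.
DISPLAY_ID="${DISPLAY_ID:-:0.0}"
VIDEO_SIZE="${VIDEO_SIZE:-1920x1080}"
FRAMERATE="${FRAMERATE:-30}"
AUDIO_SOURCE="${AUDIO_SOURCE:-default}"   # a source name from: pactl list sources

# capture MODE OUTPUT
#   MODE is "screen" or "screen+audio"; OUTPUT is e.g. capture.mp4 or capture.mkv
capture() {
    cap_mode="$1"
    cap_out="$2"

    # Video input: grab the X11 display; -probesize 20M applies to this input.
    set -- ffmpeg -hide_banner -probesize 20M \
        -f x11grab -framerate "$FRAMERATE" -video_size "$VIDEO_SIZE" -i "$DISPLAY_ID"

    # Optional audio input from PulseAudio, stored as 16-bit PCM.
    if [ "$cap_mode" = "screen+audio" ]; then
        set -- "$@" -f pulse -i "$AUDIO_SOURCE" -c:a pcm_s16le
    fi

    # Lossless H.264: -crf 0 is what makes the capture lossless.
    set -- "$@" -c:v libx264rgb -crf 0 -preset ultrafast "$cap_out"

    if [ "${DRY_RUN:-1}" = "1" ]; then
        echo "$*"      # print the command only
    else
        "$@"           # actually run ffmpeg
    fi
}
```

Calling `capture screen capture.mp4` prints the screen-only command, and `DRY_RUN=0 capture screen+audio capture.mkv` would run the screen and audio variant. MP4 is used for the screen-only case and Matroska when PCM audio is included, following the container reasoning above.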

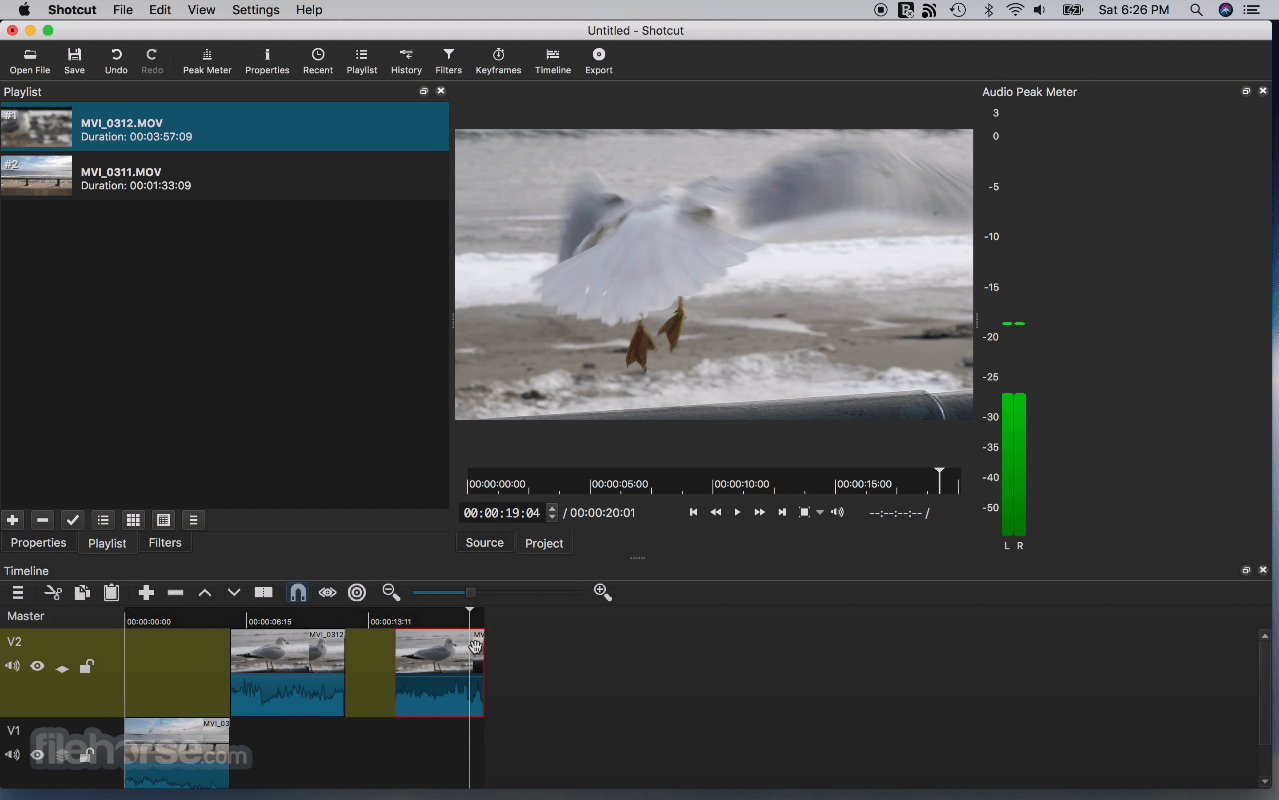

The aim here is to capture at the highest quality possible with minimal CPU usage, so that the capture can keep up without dropping frames and to leave some headroom for other processes.

1. Pause (yourself, not the recording) for a second at section/slide boundaries to make it easier to cut and replace sections/slides.
2. Separate the audio and video streams into different files.
3. Clean up the audio with Audacity, and export the result as a WAV.
4. Add both streams (using the processed audio stream) to a Shotcut project and perform any cutting/editing that is necessary.
5. Export the result using a lossless preset. I have seen Shotcut output large files that are entirely black with no audio, which is kind of impressive, and also annoying.
6. Transcode the result to the desired output format if necessary (for things like Blackboard).
7. Compress all source and intermediate files that you want to keep into either FLAC (audio only), or H.265 + FLAC + Matroska using maximum lossless compression. These files can be uploaded to echo360 if needed - they accept these formats.
8. Verify that the compressed versions are good, and if so, delete the original captures to save some disk space.

Using FFplay to display the difference between two videos.
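The stream separation, archiving, and verification steps could be sketched with ffmpeg roughly as follows. This is not the exact set of commands used for this workflow: the helper names and file names are hypothetical, the choice of -preset veryslow and -compression_level 12 for "maximum" compression is an assumption, and comparing MD5 sums of the decoded video is just one possible way to check an archive against its source. By default the helpers only print the commands (DRY_RUN=1).

```shell
#!/bin/sh
# Sketch of the post-processing steps. All file names are placeholders.

# Print the command when DRY_RUN=1 (the default), otherwise execute it.
run() {
    if [ "${DRY_RUN:-1}" = "1" ]; then
        echo "$*"
    else
        "$@"
    fi
}

# split_streams CAPTURE VIDEO_OUT AUDIO_OUT
# Copy the video and audio streams into separate files without re-encoding.
# Assumes the capture contains an audio stream (e.g. 16-bit PCM).
split_streams() {
    run ffmpeg -hide_banner -i "$1" -map 0:v -c:v copy "$2"
    run ffmpeg -hide_banner -i "$1" -map 0:a -c:a copy "$3"
}

# archive_lossless INPUT OUTPUT.mkv
# Re-encode to lossless H.265 video and FLAC audio in a Matroska container.
archive_lossless() {
    run ffmpeg -hide_banner -i "$1" \
        -c:v libx265 -preset veryslow -x265-params lossless=1 \
        -c:a flac -compression_level 12 "$2"
}

# video_md5 FILE
# Print an MD5 of the decoded video frames, so that a source file and its
# archived copy can be compared before the source is deleted.
video_md5() {
    run ffmpeg -hide_banner -loglevel error -i "$1" -map 0:v -f md5 -
}
```

For example, `split_streams capture.mkv video.mkv audio.wav` prints the two separation commands, and running `video_md5` with DRY_RUN=0 on both the original and the archive gives two sums to compare. If the sums differ, the archive may not be a bit-exact copy (for instance because of a pixel format conversion), so the original should be kept.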
